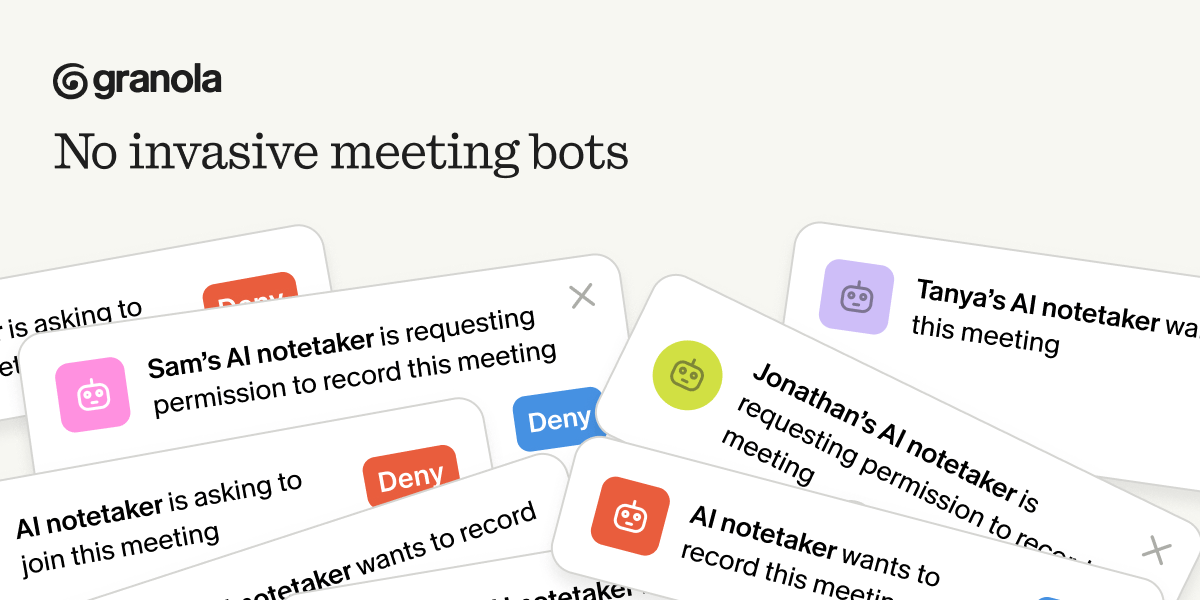


iPrompt

DEEP DIVE · IPROMPT #133 COMPANION

Why 2026 Is the Year AI Became a Utility

The software era ended last month. Here’s what comes next.

When a grid runs short of power, the utility calls it a brownout. When Anthropic ran out of compute last month, they called it “adaptive tuning.”

Last Wednesday’s iPrompt laid out the evidence: an AMD director’s 6,852-session audit showing Claude’s reasoning depth had collapsed 73% since January. Amazon’s emergency $33 billion cheque to Anthropic, locking in 5 gigawatts of AWS capacity. Google splitting its next-gen TPU into two separate chips because one can no longer carry both training and inference. An internal OpenAI memo calling Anthropic’s compute position a “strategic misstep” and describing the company as operating on a “meaningfully smaller curve” than its rivals.

The question now isn’t what happened. It’s what it means.

From software to utility

For two years, the implicit deal was software’s deal. Build it once, serve it a million times, and the marginal cost of each additional user approaches zero. Prices fall, capabilities rise, and the assumption holds: pay the same tomorrow, get at least what you got today.

That deal broke in Q1 2026.

The right analogy now is electricity. When you flip a light switch, the power reaching your home isn’t identical to the power reaching your neighbour’s. It’s routed from whichever generator has the cheapest available capacity at that moment, through infrastructure built decades ago on bets about peak demand. Prices vary by geography, time of day, weather. Service degrades — brownouts, rolling cuts, peak-hour restrictions — without the utility officially “changing” anything.

That’s where AI is now.

THE EVIDENCE
When Anthropic dropped Opus 4.6’s default effort level from “high” to “medium” on 3 March, no capability disappeared. The weights didn’t change. What changed were the infrastructure parameters: how much compute gets allocated per request, when, and for whom. Marginlab, an independent benchmarking firm set up specifically because it didn’t trust Anthropic’s self-reporting, logged the flagship’s SWE-Bench-Pro pass rate falling from 56% to 50% between March and 10 April. That is a six-point drop, or roughly 11% in relative terms. Same model, same price, different service.

OpenAI’s leaked memo was self-interested reporting. Amazon’s $33 billion cheque was not.

We’ve been here before — 2012

This isn’t the first time a compute category transitioned from software to utility. The last time was cloud.

Between 2006 and 2011, AWS was pitched as infinitely scalable. Enterprises built around that promise. You wrote software once, ran it on demand, and the cloud would grow with your workload. Capacity was assumed.

That assumption broke between 2012 and 2014. Developers who’d never thought about physical infrastructure started hitting InsufficientInstanceCapacity errors in US-East-1. Availability zones had ceilings. Specific instance types ran out during peak hours. Multi-AZ architecture — once a niche concern for the reliability-paranoid — became default enterprise hygiene. Reserved Instances shifted from a cost-saving tactic to a strategic procurement category. You didn’t just want the discount; you wanted the capacity guarantee.

By 2015, the companies that had built durable cloud businesses had one trait in common: they’d treated AWS not as software but as a utility from early on. They had fallback regions. They had multi-cloud contracts. They had reserved capacity for predictable load and spot pricing for the flexible stuff. They’d written architecture for a world where no single provider could be trusted to scale with them.

The operators building on AI in 2026 face the same transition at a new layer of the stack. The same mistakes will punish them. The same mitigations will save them.

Three second-order effects

If the cloud parallel holds, three things will matter for AI operators that didn’t matter a year ago.

Geography becomes a quality dimension. In the cloud era, multi-region architecture started as a reliability concern and ended as a performance concern: US-East-1 carried the most load and saw the most throttling. Expect the same in AI. If your API calls route to a region with older silicon during peak hours, your outputs will measurably differ from those of a colleague whose calls route to a Trainium3 cluster. No lab will advertise this. Some readers are already experiencing it without realising.

Tier creep is coming. AWS evolved from single-tier on-demand into Reserved Instances, Spot, and Dedicated pricing — different commitment and capacity profiles for different willingness-to-pay. The frontier labs will follow. Expect service tiers defined by silicon access, not features. Amazon is already offering Anthropic-native access inside AWS with priority routing; Google and Azure will counter. The lab that gets ahead of this with transparent tiering keeps its enterprise customers. The lab that pretends it isn’t happening loses them fastest.

Silent degradation becomes the default failure mode. In the cloud era, InsufficientInstanceCapacity at least returned an error. AI’s version is worse — the model still responds; the response is just quietly worse. Outages you can diagnose. Silent throttling — adaptive thinking dropping from “high” to “medium,” default effort levels tuned without announcements, peak-hour performance bands — you can’t. Unless you’ve built detection into your workflow.

What operators should do now

If AI is load-bearing in your work, three moves protect you from the transition.

01 Build detection.

Run a fixed, calibrated prompt against your primary model weekly — the same inputs, the same structure, saved outputs. When the model slips, you’ll see it in the artefacts before you feel it in your work. (We call this the Silent Throttle Check; the full prompt is in this week’s iPrompt.)
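To make the weekly check concrete, here is a minimal sketch in Python. It assumes you have already saved each week’s outputs for a fixed set of calibrated prompts and know the correct answer to each. The names (`WeeklyRun`, `pass_rate`, `flag_drift`), the exact-match grading rule and the 10-point threshold are hypothetical placeholders, not the Silent Throttle Check prompt itself; swap in whatever grading suits your tasks.

```python
# Hypothetical drift check for a fixed, calibrated prompt set.
# Grading rule and threshold are illustrative assumptions.
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class WeeklyRun:
    week: str                # label such as "2026-W14"
    outputs: Dict[str, str]  # prompt_id -> model output saved that week


def normalise(text: str) -> str:
    # Collapse whitespace and lowercase so trivial formatting changes don't count as failures.
    return " ".join(text.split()).lower()


def pass_rate(run: WeeklyRun, expected: Dict[str, str]) -> float:
    # Fraction of calibrated prompts whose saved output matches the known-good answer.
    hits = sum(
        normalise(run.outputs.get(pid, "")) == normalise(answer)
        for pid, answer in expected.items()
    )
    return hits / len(expected)


def flag_drift(runs: List[WeeklyRun], expected: Dict[str, str],
               baseline_weeks: int = 4, max_drop: float = 0.10) -> bool:
    # True if the latest run's pass rate sits more than `max_drop` (absolute)
    # below the average of the `baseline_weeks` runs before it.
    if len(runs) < baseline_weeks + 1:
        return False  # not enough history to judge yet
    history = [pass_rate(r, expected) for r in runs[-(baseline_weeks + 1):-1]]
    latest = pass_rate(runs[-1], expected)
    return mean(history) - latest > max_drop
```

For example, four weeks of perfect scores followed by a week where half the calibrated prompts fail would trip the flag. Averaging several prior weeks into the baseline means one unusually good or bad week in the history doesn’t skew the comparison.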

02 Build diversification.

Know your second and third-choice models for every task you depend on. Not in theory — tested. Run the same prompt against Gemini, Grok, or DeepSeek before you need to. Services like OpenRouter let you route API calls across every major provider from one endpoint, with automatic fallback when a model fails. The Three-Model Rule is the operational shorthand.
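The fallback half of that advice fits in a few lines of Python. This is a minimal illustration, assuming each model is wrapped in a plain function that takes a prompt and returns text; the names `ask_with_fallback` and `AllModelsFailed` are hypothetical, and no real provider SDK or OpenRouter client API is assumed.

```python
# Hypothetical ordered fallback across several models (the Three-Model Rule).
# Each model is any callable prompt -> text; wrapping a real client is up to you.
from typing import Callable, List, Sequence, Tuple

ModelFn = Callable[[str], str]


class AllModelsFailed(RuntimeError):
    """Raised when every model in the list errored or returned nothing."""


def ask_with_fallback(prompt: str,
                      models: Sequence[Tuple[str, ModelFn]]) -> Tuple[str, str]:
    # Try each (name, model) in order; return (name, answer) from the first
    # one that succeeds with non-empty text.
    errors: List[str] = []
    for name, model in models:
        try:
            answer = model(prompt)
        except Exception as exc:  # a real client would catch its own timeout/rate-limit errors
            errors.append(f"{name}: {exc}")
            continue
        if answer.strip():
            return name, answer
        errors.append(f"{name}: empty response")
    raise AllModelsFailed("; ".join(errors))
```

List models in order of preference for the task, primary first. Note that this only catches failures that announce themselves: an exception or an empty reply. A model that answers, but answers worse, passes straight through, which is why the weekly detection run still matters.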

03 Build scepticism.

Keep a changelog. When a lab updates defaults without announcing it — as Anthropic did with Opus 4.6’s effort level on 3 March — log the date, the model, the observable change. Private trackers become public pressure. The September 2025 degradation crisis only earned an Anthropic postmortem because enough developers had kept receipts to make denial untenable.
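A changelog doesn’t need tooling, but a structured one is easier to search when you need to make a case. Below is a minimal sketch in Python, assuming an append-only JSON Lines file; the file format, the `ChangeEntry` field names and the helper functions are hypothetical choices for illustration.

```python
# Hypothetical append-only changelog of observed model changes (JSON Lines).
import json
from dataclasses import asdict, dataclass
from datetime import date
from pathlib import Path
from typing import List, Optional


@dataclass(frozen=True)
class ChangeEntry:
    observed_on: str    # ISO date, e.g. "2026-03-03"
    model: str          # model name as your provider labels it
    change: str         # what you observed, in plain words
    evidence: str = ""  # optional pointer to saved outputs or screenshots


def log_change(path: Path, entry: ChangeEntry) -> None:
    # Require canonical YYYY-MM-DD so entries sort chronologically as strings.
    parsed = date.fromisoformat(entry.observed_on)  # raises ValueError if unparseable
    if parsed.isoformat() != entry.observed_on:
        raise ValueError(f"use YYYY-MM-DD, got {entry.observed_on!r}")
    # Append one JSON object per line; existing entries are never rewritten.
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(entry)) + "\n")


def read_changes(path: Path, model: Optional[str] = None) -> List[ChangeEntry]:
    # Load every entry (optionally only one model's), oldest first.
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    entries = [ChangeEntry(**json.loads(line)) for line in lines if line.strip()]
    if model is not None:
        entries = [e for e in entries if e.model == model]
    return sorted(entries, key=lambda e: e.observed_on)
```

Appending rather than rewriting means earlier entries are never silently edited, and the date check rejects entries like “3 March” that would otherwise sort out of order.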

One model is a bet. Three is a portfolio.

Where this goes next

The utility frame isn’t bearish on AI. It’s a statement about which assumptions still hold.

The models will keep getting better. The frontier will keep moving. Costs will keep falling in aggregate. But the idea that your subscription fee buys you a consistent product from a predictable provider, independent of what’s happening elsewhere on the grid — that assumption broke this quarter.

PREDICTION
By Q4 2026, at least one frontier lab will publicly announce tiered service levels based on compute region, with premium subscribers routed to the fastest silicon and free users deprioritised at peak. By Q2 2027, expect the first enterprise lawsuit over undisclosed model degradation. By year-end 2027, the first third-party model-grade certification service (essentially an Underwriters Laboratories for AI quality) will emerge in response to the trust gap.

The cloud operators who built durable businesses in the early 2010s weren’t the ones who picked the smartest provider in 2010. They were the ones who stopped believing any single provider would scale with them — early, before the capacity shocks made it obvious. They wrote their architecture for a world that hadn’t arrived yet, and by the time it did, they were already operating in it.

A decade later, at a new layer of the stack, the same move is available. The operators who take it now are the ones you’ll be reading about in 2028.

iPrompt · The AI newsletter that turns news into action.